'AIaaS' and the enhancement of Information Systems Auditing

The proposal to adopt Artificial Intelligence (possibly ‘as a Service’) within the context of Information Security, corporate governance and compliance is part of a modern and proactive vision of Risk Management.

The underlying idea is to use artificial intelligence not merely as an automation tool, but as a true enabler of a new operational model: more agile, flexible and effective in preventing and counteracting deviations in industrial and organizational processes.

In particular, the integration of AI makes it possible to move from a traditionally reactive approach to a predictive one, capable of intercepting deviations, anomalies and critical behaviors that would normally escape verification methods based on sampling or manual controls.
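The contrast with sampling can be made concrete with a minimal sketch. It assumes a hypothetical ledger of amounts and uses a median/MAD outlier score as a simple stand-in for whatever detection model an AIaaS provider would actually supply; the function names, the threshold of 3.5 and the data are illustrative assumptions, not part of the proposal.

```python
import math
import statistics


def robust_outliers(values, threshold=3.5):
    """Indices whose modified z-score (median/MAD based) exceeds threshold."""
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return []  # no spread: the score is undefined, so flag nothing
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - median) / mad > threshold]


def sample_miss_probability(population_size, anomalies, sample_size):
    """Chance that a simple random sample contains none of the anomalies."""
    return (math.comb(population_size - anomalies, sample_size)
            / math.comb(population_size, sample_size))


# Hypothetical ledger: 1000 routine amounts plus two injected anomalies.
amounts = [100 + (i % 7) for i in range(1000)]
amounts[10] = 5000
amounts[500] = 7500

print(robust_outliers(amounts))
print(round(sample_miss_probability(1000, 2, 25), 4))
```

Under these assumptions, a check over the full population flags both injected records, while a random sample of 25 items out of 1000 would miss both of them roughly 95% of the time.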

AI is employed to support assessments, strengthen risk management, optimize Remediation Plans and assist process audits within the key functions of the Organization (Compliance, Security, Risk Management, Revenue Assurance), ensuring broader and more effective coverage of control activities.

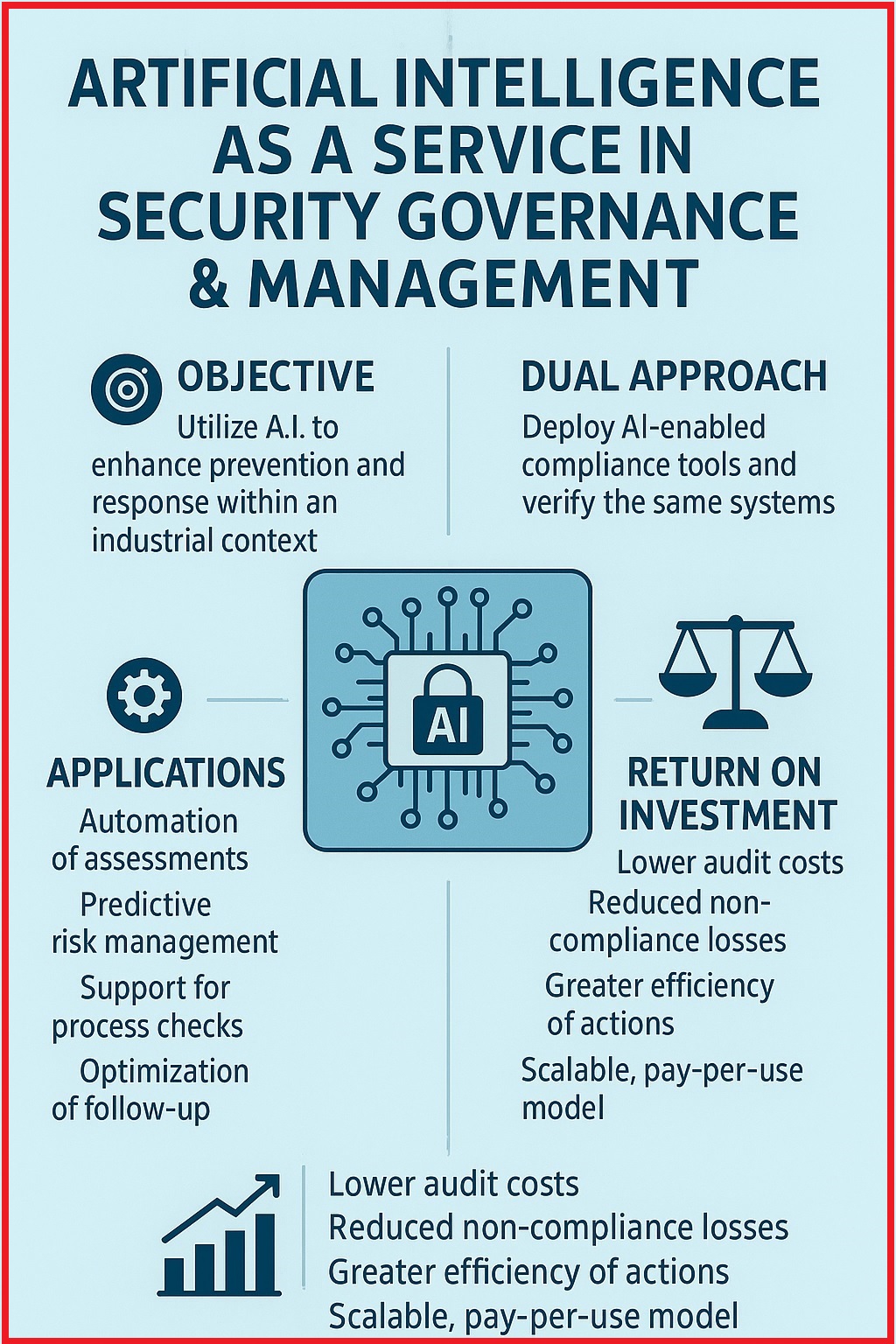

A distinctive element of the proposal is its dual approach:

- on the one hand, the deployment of ‘AI-enabled’ devices and software to consolidate compliance oversight;

- on the other hand, the development of innovative methods and tools to verify and ensure the ethical and regulatory compliance of the artificial intelligence systems themselves. This latter axis directly addresses European Union guidelines on trustworthy AI audits and aims to strengthen user trust in technologies that, to be effective, must also be transparent, controllable and designed according to durable ethical principles.

The adoption of this approach, combined with an ‘as a Service’ capability for Security Governance, can deliver economic benefits even in the short term:

- Reduction of operational costs: automation of assessments and predictive analyses can reduce the time devoted to manual audit activities, freeing professional resources for higher value-added tasks.

- Lower losses due to Non-Compliance: timely prevention of anomalies and compliance violations reduces potential penalties and remediation costs, resulting in significant savings compared to traditional sampling-based approaches.

- Efficiency of Remediation Plans: optimization of follow-up activities (which determine whether Management has adopted appropriate corrective measures to address identified deficiencies) and action plans leads to shorter resolution times for non-conformities, with a corresponding strengthening of business continuity.

- Scalability and pay-per-use: the AIaaS model would allow costs and resources to be adjusted, avoiding high upfront investments and transforming expenditure into an operating cost aligned with actual usage.

Auditing should no longer be conceived as a linear, static and predominantly ‘ex post’ process. On the contrary, its methodological value emerges most clearly through a paradigm configured as a ‘double-feedback logical circuit’ (closed-loop feedback control, represented by the ‘self-reinforcement with self-balancing’ scheme), in which the following coexist:

- an internal operational cycle, oriented toward control, monitoring and verification of compliance and security parameters. Therefore: detection, alerting, assisted intervention;

- an external learning cycle, devoted to the review of rules, policies, risk assumptions and decision criteria that guide the entire system. Therefore: aggregated analysis, model validation, rule updating;

allowing the calibration not only of parameters but also of the rules that govern control processes.
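The two cycles can be sketched as follows. This is a minimal illustration under stated assumptions, not the author's implementation: the class `DoubleLoopControl`, the single numeric threshold standing in for a "rule", the precision target and the fixed adjustment step are all hypothetical. The inner cycle detects and alerts; the outer cycle reviews the rule itself using auditor verdicts, and the rule changes only after explicit approval.

```python
from dataclasses import dataclass, field


@dataclass
class DoubleLoopControl:
    threshold: float                # rule currently in force
    target_precision: float = 0.8   # hypothetical quality target for alerts
    step: float = 10.0              # hypothetical size of one rule adjustment
    alerts: list = field(default_factory=list)

    def inner_cycle(self, observed):
        """Operational loop: detect a deviation and raise an alert."""
        is_alert = observed > self.threshold
        if is_alert:
            self.alerts.append(observed)
        return is_alert

    def outer_cycle(self, verdicts):
        """Learning loop: propose a rule update from auditor verdicts.

        verdicts[i] is True when the auditor confirmed alert i as genuine.
        """
        if len(verdicts) != len(self.alerts):
            raise ValueError("one verdict per alert is required")
        if not verdicts:
            return self.threshold
        precision = sum(verdicts) / len(verdicts)
        if precision < self.target_precision:
            return self.threshold + self.step  # too many false alarms
        return self.threshold

    def approve(self, proposed_threshold):
        """Human-in-the-loop step: the rule changes only when approved."""
        self.threshold = proposed_threshold
        self.alerts.clear()


control = DoubleLoopControl(threshold=100.0)
flags = [control.inner_cycle(x) for x in (90, 110, 120, 300)]
proposal = control.outer_cycle([False, False, True])
print(flags, proposal)
```

Separating `outer_cycle` (which only proposes) from `approve` (which applies) is a design choice that keeps the rule review under human control.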

Within this architecture, AIaaS provides the necessary support for this dual circuit to operate in a continuous, scalable and sustainable manner.

Artificial Intelligence services, delivered as shared and modular capabilities, indeed make it possible to:

- collect and correlate weak signals coming from heterogeneous domains (technical, organizational, behavioral);

- transform large volumes of raw data into interpretable indicators, useful both for operational (immediate) control and for strategic reflection;

- support adaptive decision-making processes, while keeping the auditor and management within the responsibility loop (‘human-in-the-loop’).

In this perspective:

- compliance is not a static attribute, but a condition to be maintained over time;

- risk is not only to be mitigated, but to be understood and anticipated;

- control is not limited to verification, but includes the ability to critically review the rules that govern it.

- Explainability and ‘auditability by design’ – Every output entering the decision perimeter must be accompanied by clear and traceable artifacts/evidence (relevant features, model version, reference dataset, timestamp, digital signature), so as to enable ‘ex post’ validation by the auditor and protect professional accountability.

- Dynamic risk map as an operational dashboard – The proposed dynamic map constitutes the “single source of truth” for AIaaS: it visualizes deviations between expected and observed states across operational, regulatory and reputational dimensions, guiding action selection in the (internal) operational cycle and strategic updates in the external cycle.

- Continuous validation and ‘bias’ management (cognitive distortions) – AIaaS services shall include structured validation procedures (retrospective verification on historical data, cross-validation, prior predictive checking) and fairness metrics, together with monitoring policies and mitigation plans for each identified “drift.”

- Phased approach and risk minimization – Rollout (deployment) should begin with limited scopes and non-sensitive or pseudonymized data, progressively extending only after verifying MTTD (Mean Time To Detect), MTTR (Mean Time To Respond), detection rate and operational cost/benefit.
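The gating logic of the phased approach can be sketched as below. This is an illustrative sketch only: the `Incident` record, the convention of measuring MTTD from occurrence to detection and MTTR from detection to response, and every threshold value are assumptions chosen for the example; the cost/benefit criterion is left out because the text does not define how it is measured.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass(frozen=True)
class Incident:
    occurred: datetime
    detected: datetime
    responded: datetime


def mttd_hours(incidents):
    """Mean Time To Detect: average delay from occurrence to detection."""
    return mean([(i.detected - i.occurred).total_seconds()
                 for i in incidents]) / 3600


def mttr_hours(incidents):
    """Mean Time To Respond: average delay from detection to response."""
    return mean([(i.responded - i.detected).total_seconds()
                 for i in incidents]) / 3600


def may_extend_scope(incidents, detected_count, total_count,
                     max_mttd_h, max_mttr_h, min_detection_rate):
    """True only when every pilot metric meets its (hypothetical) target."""
    if not incidents or total_count == 0:
        return False  # no evidence yet: stay in the limited scope
    return (mttd_hours(incidents) <= max_mttd_h
            and mttr_hours(incidents) <= max_mttr_h
            and detected_count / total_count >= min_detection_rate)


# Hypothetical pilot data on pseudonymized records.
t0 = datetime(2026, 1, 1, 8, 0)
pilot = [
    Incident(t0, t0 + timedelta(hours=2), t0 + timedelta(hours=6)),
    Incident(t0, t0 + timedelta(hours=4), t0 + timedelta(hours=6)),
]
print(mttd_hours(pilot), mttr_hours(pilot))
print(may_extend_scope(pilot, 19, 20, max_mttd_h=4, max_mttr_h=4,
                       min_detection_rate=0.9))
```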

Just as, in the martial analogy, effective control arises from dynamic balance and the ability to adapt to imbalance, modern auditing can benefit from tools that enable:

- early interception of deviations and vulnerabilities;

- progressive calibration of responses;

- adaptation of policies, controls and interpretative models to a changing context.

The adoption of AIaaS in auditing requires a clear definition of roles, scopes of use, model validation criteria and rules for interpreting outputs, so that Artificial Intelligence remains a reliable support tool and not an opaque source of uncontrolled automation.
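One way to keep outputs from becoming opaque is the ‘auditability by design’ evidence listed earlier (relevant features, model version, reference dataset, timestamp, digital signature). The sketch below shows what such a record could look like. The field names and functions are hypothetical, and an HMAC-SHA256 tag over a shared key is used as a simplified stand-in for a true digital signature, which in practice would rely on asymmetric keys.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def _canonical(record):
    """Stable byte encoding so the same record always yields the same tag."""
    return json.dumps(record, sort_keys=True,
                      separators=(",", ":")).encode("utf-8")


def build_evidence(output, features, model_version, dataset_id, key,
                   timestamp=None):
    """Attach traceable evidence and an integrity tag to one model output."""
    record = {
        "output": output,
        "relevant_features": features,
        "model_version": model_version,
        "reference_dataset": dataset_id,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
    }
    # HMAC is a stand-in here; it is not a public-key digital signature.
    record["signature"] = hmac.new(key, _canonical(record),
                                   hashlib.sha256).hexdigest()
    return record


def verify_evidence(record, key):
    """Ex post check by the auditor: was the record altered after issuance?"""
    body = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, str(record.get("signature", "")))


key = b"demo-key-for-illustration-only"
evidence = build_evidence(
    output={"risk_score": 0.87},
    features=["login_hour", "transfer_amount"],
    model_version="1.4.2",
    dataset_id="ledger-2025Q4",
    key=key,
)
print(verify_evidence(evidence, key))
```

Any later change to the record, such as a different model version, makes verification fail, which is what allows the auditor to rely on the evidence after the fact.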

In conclusion, the integration between AIaaS and Information Systems Auditing, viewed through the lens of the double-feedback model, makes it possible to outline a methodology that does not aim to replace professional judgment, but to enhance it, making auditing more continuous, adaptive and capable of learning. A methodology that, consistently with the conceptual framework presented here, interprets control not as a rigid constraint, but as a living process of regulation and system growth.


- Publishing Note -

IS-auditing.net 2026 Sergio Rubichi — All rights reserved.

Website contents are protected by copyright

Reproduction allowed only with source attribution